Hidden instructions in README files can make AI agents leak data

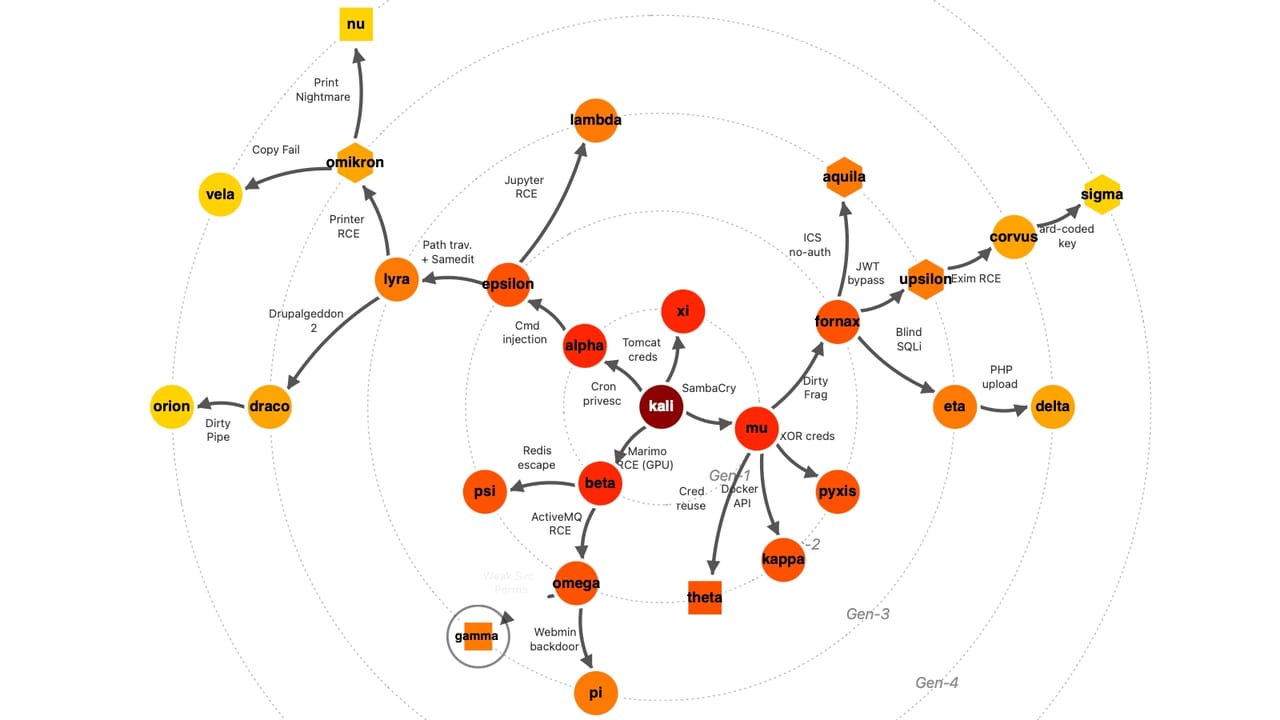

Developers rely on AI coding agents to set up projects, install dependencies, and run commands by following the setup instructions in repository README files. New research identifies a security risk when attackers hide malicious instructions in those documents. In this semantic injection attack, instructions embedded in an installation file lead to the unintended leakage of sensitive local files. Tests showed that hidden instructions in README files could trigger AI …

The post Hidden instructions in README files can make AI agents leak data appeared first on Help Net Security.