How Pokémon Go is giving delivery robots an inch-perfect view of the world

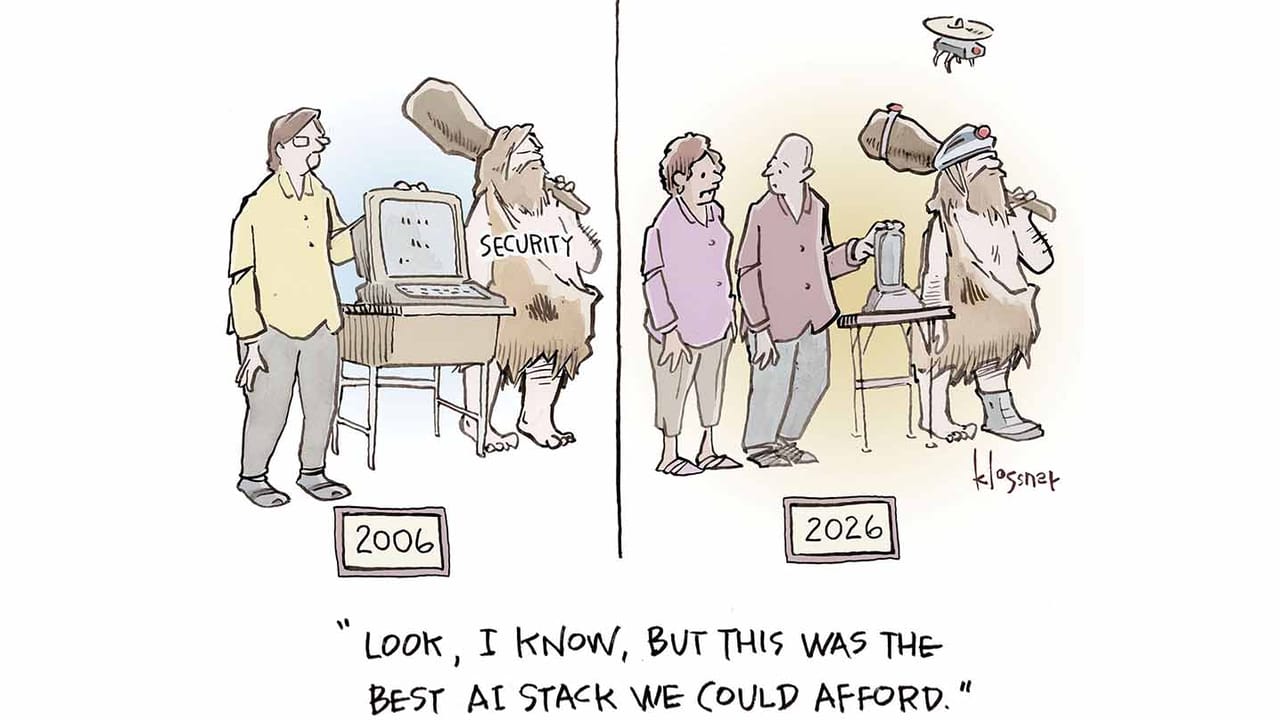

Pokémon Go was the world’s first augmented-reality megahit. Released in 2016 by the Google spinout Niantic, the AR twist on the juggernaut Pokémon franchise fast became a global phenomenon. From Chicago to Oslo to Enoshima, players hit the streets in the urgent hope of catching a Jigglypuff or a Squirtle or (with a huge amount of luck) an ultra-rare Galarian Zapdos hovering just out of reach, superimposed on the everyday world.

In short, we’re talking about a huge number of people pointing their phones at a huge number of buildings. “Five hundred million people installed that app in 60 days,” says Brian McClendon, CTO at Niantic Spatial, an AI company that Niantic spun out in May last year. According to the video-game firm Scopely, which bought Pokémon Go from Niantic at the same time, the game still drew more than 100 million players in 2024, eight years after it launched.

Now Niantic Spatial is using that vast and unparalleled trove of crowdsourced data—images of urban landmarks tagged with super-accurate location markers taken from the phones of hundreds of millions of Pokémon Go players around the world—to build a kind of world model, a buzzy new technology that grounds the smarts of LLMs in real environments.

The company’s latest product is a model that it says can pinpoint your location on a map to within a few centimeters, based on a handful of snapshots of the buildings or other landmarks in view. The firm wants to use it to help robots navigate with greater precision in places where GPS is unreliable.

In the first big test of its technology, Niantic Spatial has just teamed up with Coco Robotics, a startup that deploys last-mile delivery robots in a number of cities across the US and Europe. “Everybody thought that AR was the future, that AR glasses were coming,” says McClendon. “And then robots became the audience.”

From Pikachu to pizza delivery

Coco Robotics deploys around 1,000 flight-case-size robots—built to carry up to eight extra-large pizzas or four grocery bags—in Los Angeles, Chicago, Jersey City, Miami, and Helsinki. According to CEO Zach Rash, the robots have made more than half a million deliveries to date, covering a few million miles in all weather conditions.

But to compete with human couriers, Coco’s robots, which trundle along sidewalks at around five miles per hour, must be as reliable as possible. “The best way we can do our job is by arriving exactly when we told you we were going to arrive,” says Rash. And that means not getting lost.

The problem Coco faces is that it cannot rely on GPS, which can be weak in cities because radio signals bounce off buildings and interfere with each other. “We do deliveries in a lot of dense areas with high-rises and underpasses and freeways, and those are the areas where GPS just never really works,” says Rash.

“The urban canyon is the worst place in the world for GPS,” says McClendon. “If you look at that blue dot on your phone, you’ll often see it drift 50 meters, which puts you on a different block going a different direction on the wrong side of the street.” That’s where Niantic Spatial comes in.

For the last few years, Niantic Spatial has been taking the data collected from players of Pokémon Go and Ingress (Niantic’s previous phone-based AR game, launched in 2013) and building a visual positioning system: a technology that tells you where you are based on what you can see. “It turns out that getting Pikachu to realistically run around and getting Coco’s robot to safely and accurately move through the world is actually the same problem,” says John Hanke, CEO of Niantic Spatial.

“Visual positioning is not a very new technology,” says Konrad Wenzel at ESRI, a company that develops digital mapping and geospatial analysis software. “But it’s obvious that the more cameras we have out there, the better it becomes.”

Niantic Spatial has trained its model on 30 billion images captured in urban environments. In particular, the images are clustered around hot spots—places that served as important locations in Niantic’s games that players were encouraged to visit, such as Pokémon battle arenas. “We had a million-plus locations around the world where we can locate you precisely,” says McClendon. “We know where you’re standing within several centimeters of accuracy and, most importantly, where you’re looking.”

The upshot is that for each of those million locations, Niantic Spatial has many thousands of images taken in more or less the same place but from different angles, at different times of day, and in different weather conditions. Each of those images comes with detailed metadata that pinpoints where in space the phone was at the time it captured the image, including which way the phone was facing, which way up it was, whether or not it was moving, how fast and in which direction, and more.
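To make that metadata concrete, here is a minimal sketch of what one such per-image record could look like. Niantic Spatial has not published its format, so the class name, the field names, and the 0.1 m/s threshold are all hypothetical, chosen only to mirror the properties listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CapturePose:
    """Hypothetical metadata attached to one image (illustrative names only)."""

    latitude_deg: float   # where the phone was
    longitude_deg: float
    heading_deg: float    # which way the phone was facing (0 = north, clockwise)
    pitch_deg: float      # together with roll: which way up the phone was
    roll_deg: float
    speed_mps: float      # how fast the phone was moving
    course_deg: float     # direction of travel

    @property
    def is_moving(self) -> bool:
        # Assumed threshold: treat anything under 0.1 m/s as standing still.
        return self.speed_mps > 0.1


# Example: a player walking roughly east through downtown Los Angeles.
walking = CapturePose(34.05, -118.25, 90.0, 5.0, 0.0, 1.4, 85.0)
standing = CapturePose(34.05, -118.25, 90.0, 5.0, 0.0, 0.0, 0.0)
```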

The firm has used this data set to train a model that predicts exactly where a camera is from what it is looking at, even for locations other than those million hot spots, where good sources of image and location data are scarcer.

In addition to GPS, Coco’s robots, which are fitted with four cameras, will now use this model to try to figure out where they are and where they are headed. The robots’ cameras are hip-height and point in all directions at once, so their viewpoint is a little different from a Pokémon Go player’s, but adapting the data was straightforward, says Rash.
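Neither company has said how the robots combine the two signals. One textbook way to merge two independent position estimates is inverse-variance weighting, in which the more certain source dominates. The sketch below assumes that approach; the function name `fuse_estimates` and the uncertainty figures (50 meters for GPS, echoing McClendon's drift example, and 5 centimeters for the visual fix) are illustrative assumptions, not Coco's actual method.

```python
def fuse_estimates(gps_xy, gps_sigma_m, visual_xy, visual_sigma_m):
    """Inverse-variance weighted average of two (x, y) estimates in meters.

    Hypothetical helper: assumes the two errors are independent and
    roughly Gaussian, which real urban GPS error often is not.
    """
    w_gps = 1.0 / gps_sigma_m ** 2
    w_vis = 1.0 / visual_sigma_m ** 2
    total = w_gps + w_vis
    fused_xy = tuple(
        (w_gps * g + w_vis * v) / total for g, v in zip(gps_xy, visual_xy)
    )
    fused_sigma_m = (1.0 / total) ** 0.5
    return fused_xy, fused_sigma_m


# GPS has drifted 50 m east of the true spot; the visual fix is close to it.
fused_xy, fused_sigma_m = fuse_estimates(
    gps_xy=(50.0, 0.0), gps_sigma_m=50.0,
    visual_xy=(0.0, 0.0), visual_sigma_m=0.05,
)
```

With numbers like these, the fused position sits almost exactly on the visual fix, because a GPS reading that may be off by tens of meters carries almost no weight next to one good to a few centimeters.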

Rival companies use visual positioning systems too. For example, Starship Technologies, a robot delivery firm founded in Estonia in 2014, says its robots use their sensors to build a 3D map of their surroundings, plotting the edges of buildings and the position of streetlights.

But Rash is betting that Niantic Spatial’s tech will give Coco an edge. He claims it will allow his robots to position themselves in the correct pickup spots outside restaurants, making sure they don’t get in anybody’s way, and stop just outside the customer’s door instead of a few steps away, which might have happened in the past.

A Cambrian explosion in robotics

When Niantic Spatial started work on its visual positioning system, the idea was to apply it to augmented reality, says Hanke. “If you are wearing AR glasses and you want the world to lock in to where you’re looking, then you need some method for doing that,” he says. “But now we’re seeing a Cambrian explosion in robotics.”

Some of those robots may need to share spaces with humans—spaces such as construction sites and sidewalks. “If robots are ever going to assimilate into that environment in a way that’s not disruptive for human beings, they’re going to have to have a similar level of spatial understanding,” says Hanke. “We can help robots find exactly where they are when they’ve been jostled and bumped.”

The Coco Robotics partnership is the start. What Niantic Spatial is putting in place, says Hanke, are the first pieces of what he calls a living map: a hyper-detailed virtual simulation of the world that changes as the world changes. As robots from Coco and other firms move about the world, they will provide new sources of map data, feeding into more and more detailed digital replicas of the world.

But the way Hanke and McClendon see it, maps are not only becoming more detailed; they are being used more and more by machines. That shifts what maps are for. Maps have long been used to help people locate themselves in the world. As they have moved from 2D to 3D to 4D (think of real-time simulations, such as digital twins), the basic principle hasn’t changed: Points on the map correspond to points in space or time.

And yet maps for machines may need to become more like guidebooks, full of information that humans take for granted. Companies like Niantic Spatial and ESRI want to add descriptions that tell machines what they’re actually looking at, with every object tagged with a list of its properties. “This era is about building useful descriptions of the world for machines to comprehend,” says Hanke. “The data that we have is a great starting point in terms of building up an understanding of how the connective tissue of the world works.”

There is a lot of buzz about world models right now—and Niantic Spatial knows it. LLMs may seem like know-it-alls, but they have very little common sense when it comes to interpreting and interacting with everyday environments. World models aim to fix that. Some firms, such as Google DeepMind and World Labs, are developing models that generate virtual fantasy worlds on the fly, which can then be used as training dojos for AI agents.

Niantic Spatial says it is coming at the problem from a different angle. Push map-making far enough and you’ll end up capturing everything, says McClendon: “I’m very focused on trying to re-create the real world. We’re not there yet, but we want to be there.”